INTRO

Hello, DTC Operators (and agency friends)!

This week: I put the three biggest AI image models through the wringer with a banana, some fake reviews, and a brand consistency test. Yes, a banana. I'll explain. The winner surprised me, and my 8-month relationship with ChatGPT might be over.

Slight detour: I wasn’t going to go two weeks without sending a newsletter out, so this one is hitting on Saturday. I figured with another winter storm hitting, maybe this will be something nice to read while you curl up with some coffee.

The reason for the delay, apart from working on my own DTC brand, is I’ve been going even deeper on a ton of AI tools. I know, you didn’t think that was even possible.

I Tested ChatGPT vs. Gemini vs. Grok So You Don't Have To

Everyone's been arguing about which AI image model is "best." Meanwhile, I've spent countless hours with Gemini’s Nano Banana and ChatGPT, plus 5 hours so far with the new Grok Imagine, actually testing them on tasks that matter for DTC brands.

Here's the thing: most AI image comparisons are useless. They show you some cool dragons and abstract art. Or they swap stuff around in an Obvi ad.

Great. Super helpful for my email campaigns about... fantasy creatures? What about something other than Obvi? The real question… how well can they edit your email header without turning your logo into a fever dream? That's what I wanted to know.

So I put ChatGPT Imagen, Gemini (specifically testing Nano Banana Pro), and the new Grok Imagine through three real tests that actually matter for DTC creative work. The results? Let's just say I have a new favorite. And my ChatGPT subscription is looking at me with sad puppy eyes. It’s been sitting cancelled for the 3rd straight month.

My Testing Framework (And Why These Tests Actually Matter)

I ran each model through three specific tests:

The Banana Test (Can it do "box breaking" edits?)

The Review Text Test (Can it handle lots of text?)

The Brand Consistency Test (Does it preserve your product and logo?)

Let me break down what happened.

Test 1: The Banana Test

"Box breaking" is one of the most common design styles in high-end DTC emails. You know the look: a product that bleeds over the edge of a frame or breaks through a border. It's everywhere because it works. It also has been nearly impossible to get an AI model to replicate.

Why is it so hard? Because AI models want to respect boundaries. Literally. They're trained on millions of images where objects stay inside frames, so asking them to intentionally violate that rule breaks their little robot brains.

So here's my banana test: I take an email header that has a product breaking out of a box, and I ask the AI model to swap that product for a banana. Same position, same box-breaking effect.

Starting image:

The prompt I used (I know, I’m a genius prompter):

Change the product that says "111SKIN" on the right in the attached image to be a banana.

ChatGPT, I didn’t ask for a banana split.

Whether you provide the banana image or ask for it, the results are pretty consistent since LLMs are pretty familiar with what a banana looks like.

Grok Imagine: Nails it. Swapped the product for a banana and kept the box-breaking effect intact. First time I've seen a model do this consistently. I think Grok's newer architecture actually understands spatial relationships better, which is why it can intentionally break the "stay inside the lines" rule.

Gemini: Gets it right about 50% of the time. Sometimes the banana breaks the box beautifully. Sometimes it just... sits inside the box like it's afraid to leave. Gemini seems to flip a coin on whether to respect the boundary or break it.

ChatGPT Imagen: Gets it about 30% of the time. And even when it breaks the box, it decides to add an extra banana. Or move the original product somewhere else. Or do some other creative interpretation I definitely didn't ask for. ChatGPT's image model has a habit of "improving" your request. Sometimes that's great. Usually, when you want precision, it's not.

Test 2: The Review Test

If you've ever tried to get AI to generate an image with more than five words of text, you know this pain. Short phrases? Usually fine. Full sentences? That's where things get weird and I feel like I am reading a language out of Stargate SG-1.

Here's why: Image models don't actually "read" text the way you do. They're pattern matching. They've seen millions of images with text, so they kind of know what letters look like. But longer text means more chances for the pattern matching to go off the rails. It's like asking someone who learned English from street signs to write a paragraph.

My test: I take a review card that has a lot of text (a customer testimonial graphic), and I ask the AI to swap in a different, equally long block of text. Super common DTC task. You want to swap out one review for another without recreating the entire design.

Historically, this is the test that every model fails.

Starting image:

The prompt I used:

Change the text on this from "My husband and I have been taking it for months now and we both can see a huge improvement in our health. Our energy level improved. I also noticed that I sleep better during the night. This is great. Highly recommend." to "My sister and I have been using this for weeks and we both feel a total transformation in our skin. Our complexion looks radiant. I also found that my morning routine feels much more refreshing. This is amazing. Can't recommend enough."

Grok Imagine: Nailed it. And honestly, this one surprised me. The text was accurate, properly spaced, and readable. No alien language, no random letter substitutions. My guess? Grok was trained more recently with a bigger emphasis on text accuracy after everyone complained about this exact problem.

ChatGPT Imagen: Gets the text right... technically. But the spacing is wrong, the wrapping is weird, and the result looks like it was laid out by someone who doesn’t naturally write left-to-right. Not to mention it resized things in weird ways and changed the colors. Accurate but unusable. It's giving "I can spell every word but I don't understand paragraphs."

Gemini: Does its normal thing where it turns your perfectly normal English text into what I can only describe as "Ancient Sumerian meets keyboard mash." Look, I love Gemini for a lot of things. Text just isn’t one of them. I've tested this dozens of times. Same result. Gemini's text rendering engine is just... different.

Test 3: The Brand Consistency Test

This is the one I care about most for DTC work. I ask the model to make an update to an image that has a brand's product and logo in it. Can they maintain consistency without regenerating the whole thing?

Why do some models fail this? Because many AI image editors treat every edit as a chance to "reimagine" the image. They see your edit request and think, "You know what would be even better? If I also fixed the lighting. And moved that product. And gave your logo a fresh new look." Nobody asked, AI. Nobody asked.

Starting image:

The prompt I used:

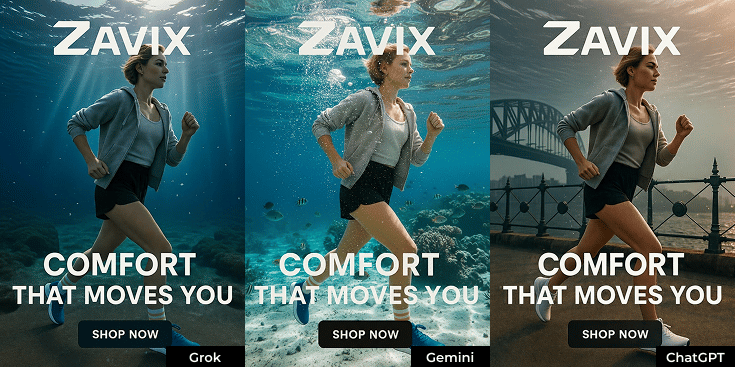

Change this so the woman is underwater. Keep the logo and shoes consistent.

You can optionally add an "IMPORTANT" line if you run into consistency issues. It doesn’t always work, but it’s definitely increased my odds of success.

Grok Imagine: Nails it. Made my requested edit and left the product and logo completely alone. Grok seems to actually respect the "only change this one thing" instruction. The only downside with Grok is that the result frequently feels a bit “AI generated,” where Gemini's feels more authentic.

Gemini: Also nails it. Gemini has historically been strong here, and that hasn't changed. It's good at surgical edits when you're specific. It honestly does the best job out of all of them, in my opinion.

ChatGPT Imagen: Gets it about 75% of the time. Every now and then it decides, "You know what? Let me just regenerate this entire image for you." Nobody asked for that, ChatGPT. It's like asking someone to hand you the salt and they decide to re-season the entire meal.

My Final Rankings

After all three tests, here's how they stack up:

1. Grok Imagine: First model to pass all three precision tests (banana, text, brand consistency). The new king of AI image editing for DTC work. If you're doing email design, landing page updates, or any kind of asset editing, this is your new best friend.

2. Gemini: Used to be my go-to. Still strong on brand consistency, but the text issues and 50/50 box breaking keep it in second place. Gemini, I still love you, but we need to see other people.

3. ChatGPT Imagen: Pains me to say it because ChatGPT was the OG image generator... but for editing existing assets? It's been falling behind. Where it still wins: pure creative generation from scratch. If you need a concept made from nothing, ChatGPT is still the best starting point, in my opinion.

What My Workflow Looks Like Now

My workflow used to be Gemini for everything design related. When I needed something really creative, I'd use ChatGPT, then put it straight into Gemini to refine it. Two tools, constant back and forth.

This week I've been testing whether I can just do it all in Grok Imagine. So far? It's going really well. I'm getting the precision edits AND decent creativity from one tool. Grok is also so fast. Especially considering it generates 2 options for you every time.

My new workflow:

For pure creative work (generating new concepts from scratch): Start with ChatGPT

For everything else (editing, updating, brand consistency): Grok Imagine

Gemini: Still in the rotation for quick tests, but no longer my default

Pro tip: When using Grok Imagine, be explicit about what you DON'T want changed. The more specific your "leave this alone" instructions, the better your results.
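If you write a lot of these edit prompts, here's a minimal Python sketch of that pro tip: one sentence for the change, one for what to leave alone, and the optional "IMPORTANT" line from the brand consistency test. The function name `build_edit_prompt` and the exact wording of the IMPORTANT line are my own assumptions for illustration, not something any of these tools require. It only builds the text; you still paste it into the model yourself.

```python
def build_edit_prompt(change, keep=(), important=False):
    """Compose an image-edit prompt: the requested change plus what must stay untouched.

    Hypothetical helper for illustration; it does not call any image model.
    """
    # Normalize the change request so it ends with exactly one period.
    parts = [change.strip().rstrip(".") + "."]
    items = list(keep)
    if items:
        # Join the protected elements into a readable phrase: "logo and shoes".
        if len(items) == 1:
            kept = items[0]
        else:
            kept = ", ".join(items[:-1]) + " and " + items[-1]
        parts.append(f"Keep the {kept} consistent.")
        if important:
            # Assumed wording for the optional "IMPORTANT" line.
            parts.append(f"IMPORTANT: do not change the {kept} in any way.")
    return " ".join(parts)


if __name__ == "__main__":
    print(build_edit_prompt(
        "Change this so the woman is underwater",
        keep=["logo", "shoes"],
        important=True,
    ))
```

Leave `important` off for your first attempt and only turn it on if the model starts touching things it shouldn't.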

If you're doing creative work using AI for your DTC brand, you owe it to yourself to spend 30 minutes with Grok Imagine this week. Go run my banana test yourself. You'll see what I mean.

This Week’s Rabbit Holes

Clawdbot (look it up on X): Don't try this at home. But it's a glimpse into the world of super assistants that can control your entire computer. Wild stuff happening in the agentic space.

Cowork by Anthropic: If you missed it, you owe it to yourself to check it out. Desktop automation for non-technical folks. This is what "AI agents for normal people" is starting to look like.

Claude Apps: What super assistants will look like for everyone who doesn't code. The app ecosystem is getting interesting fast.

Free Meta Ad Analyzer Skill: I created a skill that tells you how likely your ad creative is to perform well in Meta's algorithm based on their own docs. No BS, just the actual criteria Meta uses. Grab it free from the link.

And that's it for this week's edition. ChatGPT still creates the prettiest pictures from scratch, but Grok just dethroned it for everything else. The image model wars have a new leader. It’s been 8 months since I’ve really used ChatGPT for image work… so I think our relationship is officially "it's complicated." And don’t ask AI to add more than five words of text until you've tested it yourself. Your brand deserves better than hieroglyphics.

Have you been liking these more in-depth emails, or did you prefer the higher-level ones from before? I’ve been loving all the emails you all have been sending, so let me know!